- The latest models from Anthropic, Cohere, Meta, Stability AI, and Amazon expand customers’ choice of industry-leading models to support a variety of use cases

- Model Evaluation on Amazon Bedrock helps customers evaluate, compare, and select the best model for their use case and business needs

- Knowledge Bases for Amazon Bedrock makes it even easier to build generative AI applications that use proprietary data to deliver customized, up-to-date responses

- Customers have more options to customize models in Amazon Bedrock with fine-tuning support for Cohere Command, Meta Llama 2, and Amazon Titan models, with Anthropic Claude coming soon

- With Agents for Amazon Bedrock, customers can enable generative AI applications to plan and perform a wide variety of multistep business tasks securely and privately

- Guardrails for Amazon Bedrock helps customers implement safeguards customized to their generative AI applications and aligned with their responsible AI policies

- Blueshift, dentsu, Druva, GoDaddy, INRIX, MongoDB, OfferUp, Salesforce, SmartBots AI, and TTEC Digital are among the customers and partners using Amazon Bedrock to harness generative AI

At AWS re:Invent, Amazon Web Services, Inc. (AWS), an

Amazon.com, Inc. company (NASDAQ: AMZN), today announced Amazon

Bedrock innovations that expand model choice and deliver powerful

capabilities, making it easier for customers to build and scale

generative artificial intelligence (AI) applications customized to

their businesses. Amazon Bedrock is a fully managed service that

offers easy access to a choice of industry-leading large language

models and other foundation models from AI21 Labs, Anthropic,

Cohere, Meta, Stability AI, and Amazon, along with a broad set of

capabilities that customers need to build generative AI

applications—simplifying development while supporting privacy and

security. These announcements further democratize access to

generative AI by empowering customers with even more choice of

industry-leading models and new capabilities to evaluate them,

simplifying how they customize models with relevant and proprietary

data, supplying tools to automate the execution of complex tasks,

and equipping customers with safeguards to build and deploy

applications responsibly. Together, these new additions to Amazon

Bedrock transform how organizations of all sizes and across all

industries can use generative AI to spark innovation and reinvent

customer experiences. To get started with Amazon Bedrock, visit

aws.amazon.com/bedrock.

“Generative AI is poised to be the most transformational

technology of our time, and we are inspired by how customers are

applying it to new opportunities and tackling business challenges,”

said Dr. Swami Sivasubramanian, vice president of Data and AI at

AWS. “As customers incorporate generative AI into their businesses,

they turn to Amazon Bedrock for its choice of leading models,

customization features, agent capabilities, and enterprise-grade

security and privacy in a fully managed experience. With even more

tools at their fingertips, customers are using Amazon Bedrock to

leverage the full potential of generative AI to reimagine user

experiences, reinvent their businesses, and accelerate their

generative AI journeys.”

Organizations want to use generative AI for a wide variety of

use cases—like generating productivity gains, driving innovative

user experiences, and reimagining work—but generative AI is

evolving rapidly, with new options and innovations happening daily.

With so much fluidity in this space, customers’ ability to adapt is

arguably the most valuable tool of all. Organizations need to be

able to experiment, deploy, iterate, and pivot using the latest and

greatest models available, and be ready to immediately embrace what

comes tomorrow. To address these challenges, AWS developed Amazon

Bedrock to make building with—and moving between—a range of models

as easy as an API call, to put the latest techniques for model

customization in the hands of all developers, and to keep customers

secure and their data private. This is why customers such as Alida,

Automation Anywhere, Blueshift, BMW Group, Clariant, Coinbase, Cox

Automotive, dentsu, Druva, Genesys, Gilead, GoDaddy, Hellmann

Worldwide Logistics, INRIX, KONE, LexisNexis Legal &

Professional, Lonely Planet, NatWest, Nexxiot, OfferUp, Omnicom,

the PGA TOUR, Proofpoint, Salesforce, Siemens, Takenaka

Corporation, and Verint have turned to Amazon Bedrock to help them

harness the power of generative AI for their organizations. Today’s

announcement introduces new models and capabilities that will make

it even easier for customers to build and scale generative AI

applications.

The latest models from Anthropic, Cohere, Meta, and Stability

AI, as well as additions to the Amazon Titan family, expand model

choice for customers

No single model is ideal for every use case. Models vary across

capabilities, price, and performance. Customers need easy access to

a variety of model choices, so they can try out different models,

switch between them, and combine the best models for their needs.

With Amazon Bedrock, customers can drive rapid innovation with the

latest versions of models, including the newly available Anthropic

Claude 2.1 and Meta Llama 2 70B, and the recently available Cohere

Command Light, Cohere Embed English, Cohere Embed multilingual,

Meta Llama 2 13B, and Stability AI Stable Diffusion XL 1.0—all

accessible via an API. In addition to Amazon Titan Text Embeddings

and Amazon Titan Text models (now generally available), AWS is

introducing Amazon Titan Image Generator and Amazon Titan

Multimodal Embeddings to give customers even more choice and

flexibility to build generative AI applications with models.

Exclusive to Amazon Bedrock, Amazon Titan models are created and

pre-trained by AWS on large and diverse datasets for a variety of

use cases, with built-in support for the responsible use of AI. And

Amazon indemnifies customers against claims that generally

available Amazon Titan models or their outputs infringe on

third-party copyrights.
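
As a rough illustration of what "accessible via an API" can look like in practice, the sketch below builds provider-specific request bodies and sends them through the `invoke_model` operation of the AWS SDK for Python (boto3). The model identifiers, request fields, region, and helper names (`build_body`, `invoke`) are assumptions made for illustration and do not come from this announcement; the Amazon Bedrock documentation is the authority on the exact format each model expects.

```python
import json

# Assumed model identifiers and request shapes; verify against the Bedrock docs.
CLAUDE = "anthropic.claude-v2:1"
LLAMA = "meta.llama2-70b-chat-v1"
TITAN = "amazon.titan-text-express-v1"


def build_body(model_id: str, prompt: str, max_tokens: int = 256) -> str:
    """Return a JSON request body in the shape assumed for each provider."""
    if model_id.startswith("anthropic."):
        payload = {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": max_tokens,
        }
    elif model_id.startswith("meta."):
        payload = {"prompt": prompt, "max_gen_len": max_tokens}
    elif model_id.startswith("amazon.titan-text"):
        payload = {
            "inputText": prompt,
            "textGenerationConfig": {"maxTokenCount": max_tokens},
        }
    else:
        raise ValueError(f"no request template for {model_id}")
    return json.dumps(payload)


def invoke(model_id: str, prompt: str, region: str = "us-east-1") -> dict:
    """Send the prompt to one model and return the parsed JSON response."""
    import boto3  # imported lazily so build_body works without AWS access

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=model_id,
        body=build_body(model_id, prompt),
        contentType="application/json",
        accept="application/json",
    )
    return json.loads(response["body"].read())
```

Under these assumptions, switching between models means changing only the `model_id` argument, for example `invoke(CLAUDE, "Summarize this report")` versus `invoke(LLAMA, "Summarize this report")`. Running `invoke` requires AWS credentials and access to the chosen model.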

- Anthropic’s Claude 2.1 in Amazon Bedrock: Anthropic, an

AI safety and research company that builds reliable, interpretable,

and steerable AI systems, has brought Claude 2.1, the latest

version of their language model, to Amazon Bedrock. Claude 2.1

offers a 200K token context window and improved accuracy over long

documents. Customers can now process text-heavy documents like

financial statements and internal datasets, and Claude 2.1 can

summarize, perform Q&A, or contrast documents, and much more.

Anthropic reports that Claude 2.1 has made significant gains in

honesty with a 2x decrease in false statements compared to their

previous model.

- Meta Llama 2 70B in Amazon Bedrock: Llama 2 is the next

generation of language models by Meta. Llama 2 was trained on 40%

more data than Llama 1 and has double the context length. The Llama

2 70 billion-parameter model is now available in Amazon Bedrock, in

addition to the recently announced Llama 2 13 billion-parameter

model. Built on top of the pre-trained Llama model, Llama 2 is

optimized for dialog use cases through fine-tuning with instruction

datasets and more than 1 million human annotations. The models

perform competitively against multiple external benchmarks,

including reasoning, coding, proficiency, and knowledge tests, and

offer a compelling combination of price and performance in Amazon

Bedrock.

- New Amazon Titan Image Generator available in preview:

Amazon Titan Image Generator helps customers in industries like

advertising, ecommerce, and media and entertainment produce

studio-quality, realistic images or enhance existing images using

natural language prompts, for rapid ideation and iteration on large

volumes of images and at low cost. The model can understand complex

prompts and generate relevant images with accurate object

composition and limited distortions, reducing the generation of

harmful content and mitigating the spread of misinformation.

Customers can use the model in the Amazon Bedrock console either by

submitting a natural language prompt to generate an image or by

uploading an image for automatic editing, before configuring the

dimensions and specifying the number of variations the model should

generate. To edit, customers can isolate parts of an image to add

or replace details (e.g., inserting a surfboard into a beach scene

or replacing mountains with a forest in the background of a car

advertisement), or they can extend an image’s borders with

additional details in the same style as the original. Building on

the commitments AWS made earlier this year at the White House,

Amazon Titan applies an invisible watermark to all images it

generates to help reduce the spread of misinformation by providing

a discreet mechanism to identify AI-generated images and to promote

the safe, secure, and transparent development of AI technology. AWS

is among the first model providers to widely release built-in

invisible watermarks that are integrated into the image outputs and

are designed to be resistant to alterations.

- New Amazon Titan Multimodal Embeddings generally

available: Amazon Titan Multimodal Embeddings helps customers

power more accurate and contextually relevant multimodal search and

recommendation experiences for end users. The model converts images

and short text into embeddings (numerical representations that allow

the model to easily understand semantic meanings and relationships

among data), which are stored in a customer’s vector database. End

users can submit search queries using any combination of image and

text prompts. The model will generate embeddings for the search

query and match them to the stored embeddings to produce more

accurate and relevant search and recommendation results for end

users. For example, a stock photography company with hundreds of

millions of images can use the model to power its search

functionality, so users can search for images using a phrase,

image, or a combination of image and text (e.g., “show me images

similar to the provided image, but with sunny skies”). By default,

the model generates vectors that are well suited for search

experiences that require a high degree of accuracy and speed.

However, customers can also generate embeddings with smaller dimensions to optimize

for speed and performance. Amazon Titan Multimodal Embeddings joins

the existing Amazon Titan Text Embeddings model, which is used to

convert text input like single words, phrases, or even large

documents into embeddings for use cases like search and

personalization.
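
The matching step described for Amazon Titan Multimodal Embeddings, in which a query embedding is compared against stored embeddings, can be sketched with cosine similarity. This is a minimal illustration only: the three-dimensional vectors, file names, and the `search` helper are invented stand-ins, and the announcement does not say which similarity measure or vector database a given customer would use.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, index, top_k=2):
    """Rank stored items by similarity to the query embedding."""
    scored = [(cosine(query_vec, vec), item_id) for item_id, vec in index.items()]
    scored.sort(reverse=True)
    return [item_id for _, item_id in scored[:top_k]]


# Toy 3-dimensional vectors standing in for stored image embeddings.
index = {
    "beach_sunny.jpg": [0.9, 0.1, 0.0],
    "beach_cloudy.jpg": [0.6, 0.1, 0.7],
    "city_night.jpg": [0.0, 0.9, 0.4],
}

# Stand-in for the embedding of an image-plus-text query.
query = [0.8, 0.0, 0.1]
results = search(query, index)
print(results)
```

In a real deployment the vectors would come from the embeddings model and the ranking would be delegated to the vector database, which typically uses an approximate nearest-neighbor index rather than the exhaustive scan shown here.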

New capability helps customers efficiently evaluate, compare,

and select the best model for their use case and business

needs

Today, organizations have a wide range of model options to power

their generative AI applications. To strike the right balance of

accuracy and performance for their use case, organizations must

efficiently compare models and find the best option based on their

preferred metrics. To compare models, organizations must first

spend days identifying benchmarks, setting up evaluation tools, and

running assessments, all of which requires deep expertise in data

science. Furthermore, these tests are not useful for evaluating

subjective criteria (e.g., brand voice, relevance, and style) that

require judgment through tedious, time-intensive human-review

workflows. The time, expertise, and resources required for these

comparisons—for every new use case—make it difficult for

organizations to choose the optimal model for a task, limiting

their use of generative AI.

Now available in preview, Model Evaluation on Amazon Bedrock

helps customers evaluate, compare, and select the best models for

their specific use case, using either automatic or human

evaluations. In the Amazon Bedrock console, customers choose the

models they want to compare for a given task, such as

question-answering or content summarization. For automatic

evaluations, customers select predefined evaluation criteria (e.g.,

accuracy, robustness, and toxicity) and upload their own testing

dataset or select from built-in, publicly available datasets. For

subjective criteria or nuanced content requiring sophisticated

judgment, customers can easily set up human-based evaluation

workflows with just a few clicks. These workflows leverage a

customer’s in-house workforce, or use a managed workforce provided

by AWS, to evaluate model responses. During human-based

evaluations, customers define use case-specific metrics (e.g.,

relevance, style, and brand voice). Once customers finish the setup

process, Amazon Bedrock runs evaluations and generates a report, so

customers can easily understand how the model performed across key

criteria and can make optimal tradeoffs and quickly select the best

models for their use cases.
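
To make the automatic evaluation flow concrete, here is a small sketch of comparing candidate models on one test dataset with one metric and producing a ranked report. Everything in it is hypothetical: `model_a` and `model_b` are stubs standing in for real model calls, exact-match accuracy is just one possible criterion, and nothing here reflects how Model Evaluation on Amazon Bedrock is implemented.

```python
from typing import Callable


# Hypothetical stand-ins for two candidate models; real models would be API calls.
def model_a(question: str) -> str:
    answers = {"capital of France?": "Paris", "2 + 2?": "4"}
    return answers.get(question, "unknown")


def model_b(question: str) -> str:
    answers = {"capital of France?": "Paris"}
    return answers.get(question, "unknown")


def exact_match_accuracy(model: Callable[[str], str], dataset) -> float:
    """Fraction of test items where the model output equals the reference."""
    hits = sum(model(q).strip().lower() == ref.strip().lower() for q, ref in dataset)
    return hits / len(dataset)


def evaluate(models: dict, dataset) -> list[tuple[str, float]]:
    """Score each model and return (name, accuracy) pairs, best first."""
    report = [(name, exact_match_accuracy(fn, dataset)) for name, fn in models.items()]
    return sorted(report, key=lambda row: row[1], reverse=True)


dataset = [("capital of France?", "Paris"), ("2 + 2?", "4")]
report = evaluate({"model_a": model_a, "model_b": model_b}, dataset)
for name, score in report:
    print(f"{name}: accuracy={score:.2f}")
```

Subjective criteria such as brand voice do not reduce to a function like `exact_match_accuracy`, which is why the announcement pairs automatic evaluation with human review.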

New model customization capabilities help customers make the

most of their data, privately and securely, on AWS

Organizations want to maximize the value of their rich data

sources to deliver remarkable user experiences—at scale—that are

uniquely customized to reflect the company’s style, voice, and

services. New, purpose-built capabilities available in Amazon

Bedrock help customers personalize models privately and securely

with their own data to build differentiated generative AI-powered

applications.

- Knowledge Bases for Amazon Bedrock customizes model

responses with contextual and relevant company data:

Organizations want to supplement existing models with proprietary

data to create more relevant and accurate responses. To equip the

model with up-to-date information, organizations turn to retrieval

augmented generation (RAG), a technique that allows customers to

customize a model’s responses by augmenting prompts with data from

multiple sources, including document repositories, databases, and

APIs. Now generally available, Knowledge Bases for Amazon Bedrock

securely connects models to proprietary data sources for RAG to

deliver more accurate, context-specific responses for use cases

like chatbots and question-answering systems. Knowledge bases are

fully managed, so customers simply point to the location of their

data. Knowledge bases then fetch the text documents and save the

data to an existing vector database, or set one up on the customer’s behalf.

When a user query comes in, Amazon Bedrock orchestrates RAG by

fetching text needed to augment a prompt, sending the prompt to the

model, and returning the response. Knowledge Bases for Amazon

Bedrock supports databases with vector capabilities, including

Amazon OpenSearch, and other popular databases like Pinecone and

Redis Enterprise Cloud, with Amazon Aurora and MongoDB coming

soon.

- Cohere Command, Meta Llama 2, and Amazon Titan models can

now be fine-tuned in Amazon Bedrock, with support for Anthropic’s

Claude 2 coming soon: In addition to RAG, organizations can

also leverage fine-tuning to further train the model on a specific

task (e.g., text generation), using labeled datasets to adapt the

model’s parameters to their business, and extending its knowledge

with the lexicon and terminology used by the organization and end

users. For example, a retail customer could fine-tune a model on a

dataset of product descriptions to help it understand the brand

style and produce more accurate descriptions for the website.

Amazon Bedrock now supports fully managed fine-tuning for Cohere

Command and Meta Llama 2, along with Amazon Titan Text Express,

Amazon Titan Text Lite, Amazon Titan Multimodal Embeddings, and

Amazon Titan Image Generator (in preview), so customers can use

labeled datasets to increase model accuracy for specific tasks.

Additionally, AWS customers will soon be able to fine-tune Claude

2’s performance with their data sources. To fine-tune a model,

customers start by selecting the model and using Amazon Bedrock to

make a copy. Customers then point to labeled examples in Amazon

Simple Storage Service (Amazon S3). Amazon Bedrock incrementally

trains the model (augments the copied model with the new

information) on these examples, and the result is a private, more

accurate fine-tuned model that delivers more relevant, customized

responses. Customer data is encrypted in transit and at rest, so

all valuable customer data remains secure and private. AWS and

third-party model providers will not use any inputs or outputs from

Amazon Bedrock to train their base models.
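
The retrieval augmented generation flow described above for Knowledge Bases (fetch relevant text, augment the prompt, send it to the model, return the response) can be sketched in a few lines. This is a simplified illustration under stated assumptions: keyword overlap is swapped in for vector search, `echo_model` is a stub rather than a real model, and the helper names and sample documents are invented.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k documents sharing the most words with the query."""
    q = tokens(query)
    ranked = sorted(documents, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:top_k]


def augment(query: str, passages: list[str]) -> str:
    """Build a prompt that places retrieved passages ahead of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Use only this context:\n{context}\n\nQuestion: {query}\nAnswer:"


def answer(query: str, documents: list[str], model) -> str:
    """Orchestrate retrieve -> augment -> generate."""
    prompt = augment(query, retrieve(query, documents))
    return model(prompt)


documents = [
    "Returns are accepted within 30 days of purchase.",
    "Standard shipping takes 5 business days.",
]


def echo_model(prompt: str) -> str:
    """Hypothetical stand-in for a model call: returns the prompt unchanged."""
    return prompt


print(answer("How many days do I have for returns?", documents, echo_model))
```

Printing the augmented prompt shows that only the returns policy reaches the model, which is the point of the retrieval step: the model answers from current company data rather than from what it learned in training.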

With Agents for Amazon Bedrock, generative AI applications

can help execute multistep tasks using company systems and data

sources

While models are effective at conversing and creating new

content, they deliver more value if equipped to take actions, solve

problems, and interact with a range of systems to complete

multistep tasks (e.g., booking travel or ordering replacement

parts). However, this requires custom integrations to connect

models with company data sources, APIs, and internal and external

systems. Developers must write code to orchestrate the interactions

between models, systems, and the user, so the application can

execute a series of API calls in a logical order. To connect the

model with data sources, developers must implement RAG, so the

model can customize its responses to the task. Finally, developers

must provision and manage the requisite infrastructure, as well as

establish policies for data security and privacy. These steps are

time-consuming and require expertise, slowing the development of

generative AI applications.

Now generally available, fully managed Agents for Amazon Bedrock

enables generative AI applications to execute multistep tasks using

company systems and data sources. Agents can plan and perform most

business tasks, such as answering questions about product

availability or taking orders. Customers can create an agent using

a simple setup process, first selecting the desired model, writing

a few instructions in natural language (e.g., “you are a cheerful

customer service agent” and “check product availability in the

inventory system”) and providing access to the company’s enterprise

systems and knowledge bases. Agents automatically analyze the

request and break it down into a logical sequence, using the

model’s reasoning capabilities to determine the information needed.

The agent then takes action by identifying the APIs to call and

deciding when to call them to fulfill the request. Agents also

retrieve needed information from proprietary data sources to

provide accurate and relevant responses. Agents securely and

privately perform this process in the background each time,

relieving customers from having to engineer prompts, manage the

session context, or orchestrate systems manually. With Agents for

Amazon Bedrock, customers can improve the accuracy and speed of

development of their generative AI applications.
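
The plan-then-act behavior described for agents can be illustrated with a toy loop. In this sketch the `plan` function is a rule-based stand-in for the model's reasoning, and the inventory data, tool names, and `run_agent` helper are all hypothetical; it shows the general shape of breaking a request into ordered API calls, not how Agents for Amazon Bedrock works internally.

```python
from typing import Callable

# Hypothetical company data and APIs the agent is allowed to call.
INVENTORY = {"blue widget": 3, "red widget": 0}


def check_availability(product: str) -> int:
    return INVENTORY.get(product, 0)


def place_order(product: str) -> str:
    INVENTORY[product] -= 1
    return f"order placed for {product}"


TOOLS: dict[str, Callable] = {
    "check_availability": check_availability,
    "place_order": place_order,
}


def plan(request: str) -> list[str]:
    """Stand-in for model reasoning: map a request to an ordered list of tool names."""
    steps = ["check_availability"]
    if "order" in request.lower():
        steps.append("place_order")
    return steps


def run_agent(request: str, product: str) -> list[str]:
    """Execute planned steps in order, stopping if the product is unavailable."""
    trace = []
    for step in plan(request):
        if step == "check_availability":
            stock = TOOLS[step](product)
            trace.append(f"{product} in stock: {stock}")
            if stock == 0:
                trace.append("cannot continue: out of stock")
                break
        else:
            trace.append(TOOLS[step](product))
    return trace


print(run_agent("Please order a blue widget", "blue widget"))
```

The trace makes the ordering visible: availability is checked before any order is placed, and an out-of-stock result stops the sequence early.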

With Guardrails for Amazon Bedrock, customers can implement

safeguards across models based on application requirements and

responsible AI policies

Organizations recognize the need to manage interactions within

generative AI applications for a relevant and safe user experience.

While many models use built-in controls to filter undesirable and

harmful content, organizations want to further customize

interactions to remain on topics relevant to their business, align

with company policies, and adhere to responsible AI principles. For

example, a bank might want to configure its online assistant to

refrain from providing investment advice, avoid queries about

competitors, and limit harmful content. As another example, after a

customer service call, personally identifiable information (PII)

may need to be redacted from the call summary. Organizations may

need to change models, use multiple models, or replicate policies

across applications, and they want a simple way to consistently

deploy their preferences across all these areas simultaneously.

Deep expertise is required to build custom protection systems with

these kinds of safeguards and integrate them into applications, and

the processes can take months. Organizations want a streamlined way

to enforce key policies and rules in generative AI applications to

deliver relevant user experiences and support safer use of the

technology.

Now available in preview, Guardrails for Amazon Bedrock empowers

customers to implement safeguards for generative AI applications

that are customized to their use cases and responsible AI

principles, enhancing the safety and privacy of user interactions.

Guardrails drive consistency in how models in Amazon Bedrock

respond to undesirable and harmful content within applications.

Customers can apply guardrails to all large language models in

Amazon Bedrock, as well as to fine-tuned models and in combination

with Agents for Amazon Bedrock. To create a guardrail in the Amazon

Bedrock console, customers start with natural language descriptions

to define the denied topics within the context of their

application. Customers can also configure thresholds across hate

speech, insults, sexualized language, and violence to filter out

harmful content to their desired level. In early 2024, customers

will also be able to redact PII in models’ responses, set profanity

filters, and provide a list of custom words to block interactions

between users and models. Guardrails automatically evaluate both

user queries and model responses to detect and help prevent content

that falls into restricted categories. Customers can create

multiple guardrails to support different use cases and apply the

same guardrails across multiple models. Guardrails for Amazon

Bedrock empowers customers to innovate safely by providing a

consistent user experience and standardizing safety and privacy

controls across generative AI applications.
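
The idea of one policy that evaluates both user queries and model responses can be sketched as follows. This is an assumption-laden toy: the `Guardrail` class, keyword lists, score thresholds, and the stub model and classifier are all invented for illustration, and keyword matching is a crude substitute for the natural language topic descriptions the announcement describes.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    """Hypothetical policy: denied topics plus per-category score thresholds."""
    denied_topics: dict[str, list[str]] = field(default_factory=dict)
    thresholds: dict[str, float] = field(default_factory=dict)

    def check(self, text: str, category_scores: dict[str, float]) -> list[str]:
        """Return the reasons this text violates the policy (empty if none)."""
        lowered = text.lower()
        reasons = [
            f"denied topic: {topic}"
            for topic, keywords in self.denied_topics.items()
            if any(k in lowered for k in keywords)
        ]
        reasons += [
            f"{category} score {score:.2f} over threshold"
            for category, score in category_scores.items()
            if score > self.thresholds.get(category, 1.0)
        ]
        return reasons


def guarded_call(guardrail, model, classifier, user_query: str) -> str:
    """Apply the same guardrail to the user query and to the model response."""
    if guardrail.check(user_query, classifier(user_query)):
        return "Sorry, I can't help with that request."
    response = model(user_query)
    if guardrail.check(response, classifier(response)):
        return "Sorry, I can't share that response."
    return response


# Stubs standing in for a real model and a real content classifier.
def stub_model(query: str) -> str:
    return "Our branch opens at 9am."


def stub_classifier(text: str) -> dict[str, float]:
    return {"insults": 0.9 if "idiot" in text.lower() else 0.0}


bank = Guardrail(
    denied_topics={"investment advice": ["invest", "stock"]},
    thresholds={"insults": 0.5},
)
print(guarded_call(bank, stub_model, stub_classifier, "Which stock should I buy?"))
print(guarded_call(bank, stub_model, stub_classifier, "When do you open?"))
```

Because the policy lives in one object that is separate from the model, the same `bank` guardrail could be passed to calls against a different model without changes, mirroring the reuse across models described above.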

Blueshift provides brands with marketing automation and customer

data platforms to deliver personalized customer engagement across

all communication channels and devices. “Product catalogs are

rapidly evolving with new content being changed every minute, and

we need to continuously update our embeddings to ensure

recommendations for brand audiences remain relevant,” said Manyam

Mallela, co-founder and chief AI officer at Blueshift. “Amazon

Titan Multimodal Embeddings in Amazon Bedrock is outperforming

older models from other providers that we used, offering more

nuanced and contextually relevant recommendations without complex

feature engineering. Our team has seen a 10% performance

improvement using Amazon Titan Multimodal Embeddings. With the

robust infrastructure, security, and collaboration offered by AWS,

Blueshift is poised to seamlessly integrate cutting-edge

embeddings, ensuring that our recommendation solutions remain

state-of-the-art and lead to improved audience engagement.”

Dentsu is one of the world's largest providers of integrated

marketing and technology services. “We work at the convergence of

marketing, technology, and consulting to drive people-centered

transformations for brands that want to shape society for the

better, and generative AI is changing our ability to deliver at

scale and speed for clients, augmenting, not replacing, our

72,000-strong team around the world,” said Brian Klochkoff,

executive vice president of Innovation & Emerging Technologies

at dentsu. “Specifically, Amazon Bedrock gives us the enterprise

control and ease-of-use to deploy third-party models for

decentralized usage across our product and engineering teams. This

allows our teams to innovate with the latest and greatest

generative AI advancements in a safe and responsible space, while

inventing cutting-edge opportunities for clients.”

Druva enables cyber, data, and operational resilience for every

organization with their leading, at-scale software-as-a-service

(SaaS) solution. “It takes time and effort to manually build

architecture that incorporates real-time and regularly changing

data into applications, so we wanted a way to automate this

process,” said David Gildea, vice president of Product, Generative

AI at Druva. “We built our new service Dru—an AI co-pilot that IT

teams can use to access company information and perform actions in

natural language—in Amazon Bedrock because it provides fully

managed and secure access to an array of foundation models. Next,

we plan to integrate agents and knowledge bases into Dru to simply

implement and automate RAG and improve end-user experiences, which

we expect will lead to 70% more accurate responses, 50% faster

response times, and 50% lower costs compared to similar models on

other platforms. With Agents and Knowledge Bases for Amazon

Bedrock, we can add new capabilities, like support ticket creation,

with increased velocity and without having to re-engineer

Dru—enabling us to deliver the latest generative AI-powered

solutions to our customers.”

GoDaddy is a leading domain registrar, commerce, and web hosting

company serving more than 20 million customers. “Everyday

entrepreneurs—from bakers to plumbers—use GoDaddy to establish

their businesses online, and we are heavily leaning into generative

AI as it can help these entrepreneurs compete with and become the

next big brand,” said Travis Muhlestein, chief data and analytics

officer at GoDaddy. “We have long worked with AWS because of their

dedication to customers, history of democratizing cloud services,

and current mission of making generative AI accessible to

businesses of all sizes. Amazon Bedrock plays a critical role in

our AI platform, and we plan to use Amazon Titan Text Express to

accelerate internal development patterns. We are especially excited

to test Amazon Titan Image Generator because it has the potential

to help small businesses create professional marketing assets that

solidify their brand identities and can propel their businesses

exponentially.”

INRIX is a global leader in connected car services and

transportation analytics. “The insights we provide our customers

are informed by a vast amount of data, so it is critical that we

pursue the most efficient and effective generative AI solutions to

drive data analysis through our new causality platform,” said Bryan

Mistele, chief executive officer at INRIX. “Amazon Bedrock's model

evaluation capability simplifies the process of identifying the

right foundation model for our needs by aggregating key metrics,

expediting our selection from days to minutes. For content needing

sophisticated judgement, we can use our own employees at scale to

evaluate the model's responses and ensure they accurately reflect

our domain and the brand voice of our chatbot application. With a

visual and comprehensive resulting report, not only does the

capability boost our confidence that we have selected the right

model for the task, but it helps us focus instead on delivering

innovative services to move people, cities, and businesses

forward.”

MongoDB's mission is to empower innovators to create, transform,

and disrupt industries by unleashing the power of software and

data. “More and more customers across industries want to take

advantage of generative AI to build next-generation applications,

but many of them are concerned about data privacy and ensuring the

accuracy of the outputs from AI-powered systems,” said Sahir Azam,

chief product officer at MongoDB. “To meet customers where they

are, we made MongoDB Atlas available as a knowledge base for Amazon

Bedrock, so our joint customers can securely build generative AI

applications with their operational data to create personalized

experiences with the trust and accuracy their end users expect.

Through this integration, customers can access industry-leading

foundation models and use their data processed by MongoDB Atlas

Vector Search to create applications that deliver more relevant

outputs with the right context. Leveraging the data privacy best

practices built into Knowledge Bases for Amazon Bedrock, customers

can spend less time on the operational overhead of generative AI

and focus more on deploying the technology to provide highly

engaging end-user experiences on AWS.”

OfferUp is one of the largest mobile marketplaces for local

buyers and sellers in the U.S. and is changing the way people

transact in their communities by providing a uniquely simple and

trusted experience. “With millions of listings posted daily, it is

important that we continually improve our personalized search and

recommendation experiences for our users,” said Melissa Binde,

chief technology officer at OfferUp. “To achieve this goal, we are

experimenting with Amazon Titan Multimodal Embeddings, with the aim

of revolutionizing local commerce through cutting-edge semantic

search capabilities. During an initial evaluation with the new

multimodal model, we have observed substantial improvement in

relevance recall for keyword searches. This advancement will

significantly expedite successful matches, benefiting both our

buyers and sellers.”

Salesforce is a leading AI customer relationship management

(CRM) platform, driving productivity and trusted customer

experiences powered by AI, CRM, and data. “AI is an integral part

of our commitment to help companies connect with their customers in

new and personalized ways,” said Kaushal Kurapati, senior vice

president of Product at Salesforce. “Amazon Bedrock is a key

component of our open ecosystem approach to models, seamlessly

grounding models with customer data, and integrating them into

workflows across Salesforce. And with the addition of new

evaluation capabilities, comparing foundation models across

multiple criteria—including qualitative aspects such as

friendliness, style, and brand relevance—will make operationalizing

models easier and faster than ever.”

SmartBots AI helps enterprises build their own conversational

chatbots to deliver elevated customer support and sales

experiences. “It is critical that our customers can trust the

responses of the conversational chatbots they are building with

SmartBots AI, as these responses represent their brands and will be

used by their employees and clients,” said Jaya Prakash Kommu,

co-founder and chief technology officer at SmartBots AI. “Because

our chatbot development service is powered by Amazon Bedrock,

customers’ chatbots inherit AWS data safety and privacy best

practices. Their chatbots will also have access to Guardrails for

Amazon Bedrock to set, control, and avoid inappropriate and

unwanted content in user prompts and responses that their chatbots

generate, so users have safe and brand-adhering experiences. Our

customers are excited for this safety feature to build and use

generative AI responsibly.”

TTEC Digital uses AI to improve customer experiences through

cutting-edge contact center technologies. “Our clients are always

looking for ways to shorten contact center response times for their

customer interactions, so we are building a generative AI-powered

solution using Amazon Bedrock to partially automate information

gathering and response generation,” said Dave Seybold, chief

executive officer at TTEC Digital. “We will soon be deploying

Amazon Titan Text Express within our clients’ enterprise contact

center environments to enable rapid retrieval of customer data

through simple prompting, helping their contact center

representatives provide fast, more informed responses. We predict

this solution will increase customer satisfaction, decrease average

call times, reduce post-call workload for representatives, and

lower labor costs. With Amazon Titan Text Express, our clients’

contact center representatives will be empowered to address a

broader range of customer issues and extend the transformative

advantage of generative AI to their customers.”

About Amazon Web Services

Since 2006, Amazon Web Services has been the world’s most

comprehensive and broadly adopted cloud. AWS has been continually

expanding its services to support virtually any workload, and it

now has more than 240 fully featured services for compute, storage,

databases, networking, analytics, machine learning and artificial

intelligence (AI), Internet of Things (IoT), mobile, security,

hybrid, virtual and augmented reality (VR and AR), media, and

application development, deployment, and management from 102

Availability Zones within 32 geographic regions, with announced

plans for 15 more Availability Zones and five more AWS Regions in

Canada, Germany, Malaysia, New Zealand, and Thailand. Millions of

customers—including the fastest-growing startups, largest

enterprises, and leading government agencies—trust AWS to power

their infrastructure, become more agile, and lower costs. To learn

more about AWS, visit aws.amazon.com.

About Amazon

Amazon is guided by four principles: customer obsession rather

than competitor focus, passion for invention, commitment to

operational excellence, and long-term thinking. Amazon strives to

be Earth’s Most Customer-Centric Company, Earth’s Best Employer,

and Earth’s Safest Place to Work. Customer reviews, 1-Click

shopping, personalized recommendations, Prime, Fulfillment by

Amazon, AWS, Kindle Direct Publishing, Kindle, Career Choice, Fire

tablets, Fire TV, Amazon Echo, Alexa, Just Walk Out technology,

Amazon Studios, and The Climate Pledge are some of the things

pioneered by Amazon. For more information, visit amazon.com/about

and follow @AmazonNews.

View source version on businesswire.com: https://www.businesswire.com/news/home/20231129022048/en/

Amazon.com, Inc. Media Hotline Amazon-pr@amazon.com

www.amazon.com/pr

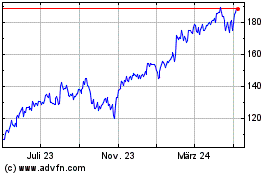

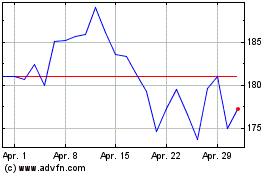

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

Von Jun 2024 bis Jul 2024

Amazon.com (NASDAQ:AMZN)

Historical Stock Chart

Von Jul 2023 bis Jul 2024