Snowflake’s AI Research Team, in collaboration with the open source community, launches a Massive LLM Inference and Fine-Tuning System Stack, establishing a new state-of-the-art solution for open source inference and fine-tuning systems for multi-hundred billion parameter models like Llama 3.1 405B

Snowflake (NYSE: SNOW), the AI Data Cloud company, today

announced that it will host the Llama 3.1 collection of

multilingual open source large language models (LLMs) in Snowflake

Cortex AI for enterprises to easily harness and build powerful AI

applications at scale. This offering includes Meta’s largest and

most powerful open source LLM, Llama 3.1 405B, with Snowflake

developing and open sourcing the inference system stack to enable

real-time, high-throughput inference and further democratize

powerful natural language processing and generation applications.

Snowflake’s industry-leading AI Research Team has optimized Llama

3.1 405B for both inference and fine-tuning, supporting a massive

128K context window from day one, while enabling real-time

inference with up to 3x lower end-to-end latency and

1.4x higher throughput than existing open source solutions.

Moreover, the stack allows fine-tuning of the massive model on just

a single GPU node, eliminating costs and complexity for developers

and users, all within Cortex AI.

This press release features multimedia. View

the full release here:

https://www.businesswire.com/news/home/20240723098720/en/

Graphic: Snowflake Teams Up with Meta to Host and Optimize New Flagship Model Family in Snowflake Cortex AI (Business Wire)

By partnering with Meta, Snowflake is providing customers with

easy, efficient, and trusted ways to seamlessly access, fine-tune,

and deploy Meta’s newest models in the AI Data Cloud, with a

comprehensive approach to trust and safety built-in at the

foundational level.

“Snowflake’s world-class AI Research Team is blazing a trail for

how enterprises and the open source community can harness

state-of-the-art open models like Llama 3.1 405B for inference and

fine-tuning in a way that maximizes efficiency,” said Vivek

Raghunathan, VP of AI Engineering, Snowflake. “We’re not just

bringing Meta’s cutting-edge models directly to our customers

through Snowflake Cortex AI. We’re arming enterprises and the AI

community with new research and open source code that supports 128K

context windows, multi-node inference, pipeline parallelism, 8-bit

floating point quantization, and more to advance AI for the broader

ecosystem.”

Snowflake’s Industry-Leading AI Research Team Unlocks the Fastest, Most Memory Efficient Open Source Inference and Fine-Tuning

Snowflake’s AI Research Team continues to push the boundaries of

open source innovations through its regular contributions to the AI

community and transparency around how it is building cutting-edge

LLM technologies. In tandem with the launch of Llama 3.1 405B,

Snowflake’s AI Research Team is now open sourcing its Massive

LLM Inference and Fine-Tuning System

Optimization Stack in collaboration with DeepSpeed,

Hugging Face, vLLM, and the broader AI

community. This breakthrough establishes a new

state of the art for open source inference and fine-tuning systems

for multi-hundred billion parameter models.

Massive model scale and memory requirements pose significant

challenges for users aiming to achieve low-latency inference for

real-time use cases, high throughput for cost effectiveness, and

long context support for various enterprise-grade generative AI use

cases. The memory requirements of storing model and activation

states also make fine-tuning extremely challenging, with the large

GPU clusters required to fit the model states for training often

inaccessible to data scientists.
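
As a rough, back-of-the-envelope illustration of the memory pressure described above, the sketch below estimates the memory needed for the model weights alone at two precisions. It is not taken from Snowflake's stack. The 2-byte (BF16) and 1-byte (FP8) sizes are standard, but the node configuration (8 GPUs with 80 GB each) is an assumption chosen for illustration, and the estimate ignores activations, KV cache, and optimizer states.

```python
# Back-of-the-envelope estimate of weight memory for a 405B-parameter model.
# Only the weights are counted; activations, KV cache, and optimizer
# states are ignored, so real requirements are higher.
PARAMS = 405e9  # parameter count implied by the model name "405B"

BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}

# Hypothetical node: 8 GPUs with 80 GB each (an assumption for
# illustration, not a figure from the press release).
NODE_GPUS = 8
GPU_MEMORY_GB = 80


def weight_memory_gb(params: float, dtype: str) -> float:
    """Return the memory needed for weights alone, in GB (10^9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_node(params: float, dtype: str) -> bool:
    """Check whether the weights alone fit in the node's total GPU memory."""
    return weight_memory_gb(params, dtype) <= NODE_GPUS * GPU_MEMORY_GB


for dtype in BYTES_PER_PARAM:
    gb = weight_memory_gb(PARAMS, dtype)
    print(f"{dtype}: {gb:.0f} GB of weights, fits on one node: "
          f"{fits_on_node(PARAMS, dtype)}")
```

Under these assumptions, 16-bit weights alone exceed the hypothetical node's 640 GB of GPU memory, while 8-bit weights leave headroom, which is one reason 8-bit floating point quantization matters for single-node inference.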

Snowflake’s Massive LLM Inference and Fine-Tuning System

Optimization Stack addresses these challenges. By using advanced

parallelism techniques and memory optimizations, Snowflake enables

fast and efficient AI processing, without needing complex and

expensive infrastructure. For Llama 3.1 405B, Snowflake’s system

stack delivers real-time, high-throughput performance on just a

single GPU node and supports a massive 128K context window across

multi-node setups. This flexibility extends to both next-generation

and legacy hardware, making it accessible to a broader range of

businesses. Moreover, data scientists can fine-tune Llama 3.1 405B

using mixed precision techniques on fewer GPUs, eliminating the

need for large GPU clusters. As a result, organizations can adapt

and deploy powerful enterprise-grade generative AI applications

easily, efficiently, and safely.

Snowflake’s AI Research Team has also developed optimized

infrastructure for fine-tuning, including model distillation,

safety guardrails, retrieval augmented generation (RAG), and

synthetic data generation so that enterprises can easily get

started with these use cases within Cortex AI.

Snowflake Cortex AI Furthers Commitment to Delivering Trustworthy, Responsible AI

AI safety is of the utmost importance to Snowflake and its

customers. As a result, Snowflake is making Snowflake Cortex

Guard generally available to further safeguard against harmful

content for any LLM application or asset built in Cortex AI —

either using Meta's latest models, or the LLMs available from other

leading providers including AI21 Labs, Google,

Mistral AI, Reka, and Snowflake itself. Cortex

Guard leverages Meta’s Llama Guard 2, further unlocking trusted AI

for enterprises so they can ensure that the models they’re using

are safe.

Comments on the News from Snowflake Customers and Partners

“As a leader in the hospitality industry, we rely on generative

AI to deeply understand and quantify key topics within our Voice of

the Customer platform. Gaining access to Meta’s industry-leading

Llama models within Snowflake Cortex AI empowers us to further talk

to our data, and glean the necessary insights we need to move the

needle for our business,” said Dave Lindley, Sr. Director of Data

Products, E15 Group. “We’re looking forward to fine-tuning

and testing Llama to drive real-time action in our operations based

on live guest feedback.”

“Safety and trust are a business imperative when it comes to

harnessing generative AI, and Snowflake provides us with the

assurances we need to innovate and leverage industry-leading large

language models at scale,” said Ryan Klapper, an AI leader at

Hakkoda. “The powerful combination of Meta’s Llama models

within Snowflake Cortex AI unlocks even more opportunities for us

to service internal RAG-based applications. These applications

empower our stakeholders to interact seamlessly with comprehensive

internal knowledge bases, ensuring they have access to accurate and

relevant information whenever needed.”

“By harnessing Meta’s Llama models within Snowflake Cortex AI,

we’re giving our customers access to the latest open source LLMs,”

said Matthew Scullion, Matillion CEO and co-founder. “The

upcoming addition of Llama 3.1 gives our team and users even more

choice and flexibility to access the large language models that

suit use cases best, and stay on the cutting-edge of AI innovation.

Llama 3.1 within Snowflake Cortex AI will be immediately available

with Matillion on Snowflake’s launch day.”

“As a leader in the customer engagement and customer data

platform space, Twilio's customers need access to the right data to

create the right message for the right audience at the right time,”

said Kevin Niparko, VP, Product and Technology Strategy, Twilio

Segment. “The ability to choose the right model for their use

case within Snowflake Cortex AI empowers our joint customers to

generate AI-driven, intelligent insights and easily activate them

in downstream tools. In an era of rapid evolution, businesses need

to iterate quickly on unified data sets to drive the best

outcomes.”

Learn More:

- For enterprises interested in distilling Llama 3.1 405B for

their domain-specific use cases and getting additional support from

Snowflake’s AI Research Team, fill out this form.

- More details on how to get started with Llama 3.1 405B and

Snowflake Cortex AI can be found in this quickstart guide.

- Explore the various ways developers can harness Llama

3.1 405B within Snowflake Cortex AI in this blog post.

- Dive into the technical details of how Snowflake’s AI Research

Team is enabling efficient and cost-effective inference, alongside

the fine-tuning of massive multi-hundred billion parameter

models.

- Learn more about the continued innovation coming out of

Snowflake’s AI Research Team, and meet the experts driving the

future of AI forward in the AI Research hub.

- Stay on top of the latest news and announcements from Snowflake

on LinkedIn and Twitter / X.

Forward-Looking Statements

This press release contains express and implied forward-looking

statements, including statements regarding (i) Snowflake’s business

strategy, (ii) Snowflake’s products, services, and technology

offerings, including those that are under development or not

generally available, (iii) market growth, trends, and competitive

considerations, and (iv) the integration, interoperability, and

availability of Snowflake’s products with and on third-party

platforms. These forward-looking statements are subject to a number

of risks, uncertainties and assumptions, including those described

under the heading “Risk Factors” and elsewhere in the Quarterly

Reports on Form 10-Q and the Annual Reports on Form 10-K that

Snowflake files with the Securities and Exchange Commission. In

light of these risks, uncertainties, and assumptions, actual

results could differ materially and adversely from those

anticipated or implied in the forward-looking statements. As a

result, you should not rely on any forward-looking statements as

predictions of future events.

© 2024 Snowflake Inc. All rights reserved. Snowflake, the

Snowflake logo, and all other Snowflake product, feature and

service names mentioned herein are registered trademarks or

trademarks of Snowflake Inc. in the United States and other

countries. All other brand names or logos mentioned or used herein

are for identification purposes only and may be the trademarks of

their respective holder(s). Snowflake may not be associated with,

or be sponsored or endorsed by, any such holder(s).

About Snowflake

Snowflake makes enterprise AI easy, efficient and trusted.

Thousands of companies around the globe, including hundreds of the

world’s largest, use Snowflake’s AI Data Cloud to share data, build

applications, and power their business with AI. The era of

enterprise AI is here. Learn more at snowflake.com (NYSE:

SNOW).


Kaitlyn Hopkins, Senior Product PR Lead, Snowflake

press@snowflake.com

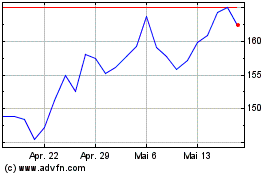

Snowflake (NYSE:SNOW)

Historical Stock Chart

Von Sep 2024 bis Okt 2024

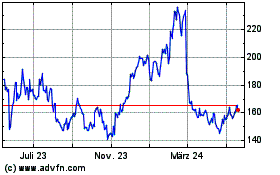

Snowflake (NYSE:SNOW)

Historical Stock Chart

Von Okt 2023 bis Okt 2024