Elastic Security Labs Releases Guidance to Avoid LLM Risks and Abuses

06 May 2024 - 8:00PM

Business Wire

Product controls and SOC countermeasures to securely adopt LLMs

Elastic (NYSE: ESTC), the leading Search AI company, announced LLM Safety Assessment: The Definitive Guide on Avoiding Risk and Abuses, the latest research issued by Elastic Security Labs. The LLM Safety Assessment explores large language model (LLM) safety and provides attack mitigation best practices and suggested countermeasures for LLM abuses.

Generative AI and LLM implementations have become widely adopted over the past 18 months, with some companies pushing to implement them as quickly as possible. This has expanded the attack surface and left developers and security teams without clear guidance on how to adopt emerging LLM technology safely.

“For all their potential, broad LLM adoption has been met with unease by enterprise leaders, seen as yet another doorway for malicious actors to gain access to private information or a foothold in their IT ecosystems,” said Jake King, head of threat and security intelligence at Elastic. “Publishing open detection engineering content is in Elastic’s DNA. Security knowledge should be for everyone; safety is in numbers. We hope that all organizations, whether Elastic customers or not, can take advantage of these new rules and guidance.”

The LLM Safety Assessment builds and expands on the Open Worldwide Application Security Project (OWASP) research focused on the most common LLM attack techniques. The research includes crucial information that security teams can use to protect their LLM implementations, including in-depth explanations of risks, best practices and suggested countermeasures to mitigate attacks. The countermeasures explored in the research cover different areas of the enterprise architecture: primarily in-product controls that developers should adopt when building LLM-enabled applications, and information security measures that SOCs must add to verify and validate the secure usage of LLMs.

In addition to the 1,000+ detection rules already published and maintained on GitHub, Elastic Security Labs added an initial set of detections specifically for LLM abuses. These new rules are an example of the out-of-the-box detection rules now included to detect LLM abuses.

“Normalizing and standardizing how data is ingested and analyzed makes the industry safer for everyone, which is exactly what this research intends to do,” said King. “Our detection rule repository helps customers monitor threats with confidence, as quickly as possible, and now includes LLM implementations. The rules are built and maintained publicly in alignment with Elastic’s dedication to transparency.”

Additional Resources

- Blog: Elastic Advances LLM Security with Standardized Fields and Integrations
  - Explores the creation of integration workflows to reduce friction when assessing LLM security and details a new integration with AWS Bedrock.
- Blog: Embedding Security in LLM Workflows: Elastic's Proactive Approach
  - Highlights suggestions and examples of how to detect malicious LLM activities with ES|QL, and proposes a proxy-based telemetry solution.
- Blog: Accelerating Elastic detection tradecraft with LLMs
  - Focuses on Elastic Security Labs’ dedication to LLM research in the context of streamlining detection workflows with generative AI.

About Elastic

Elastic (NYSE: ESTC), the Search AI Company, enables everyone to find the answers they need in real time using all their data, at scale. Elastic’s solutions for search, observability and security are built on the Elastic Search AI Platform, the development platform used by thousands of companies, including more than 50% of the Fortune 500. Learn more at elastic.co.

Elastic and associated marks are trademarks or registered trademarks of Elastic N.V. and its subsidiaries. All other company and product names may be trademarks of their respective owners.

View source version on businesswire.com: https://www.businesswire.com/news/home/20240506945825/en/

Candace Metoyer PR-team@elastic.co

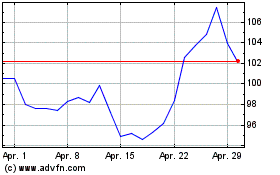

Elastic NV (NYSE:ESTC)

Historical Stock Chart

Von Apr 2024 bis Mai 2024

Elastic NV (NYSE:ESTC)

Historical Stock Chart

Von Mai 2023 bis Mai 2024