Powerful computer vision tech and natural language models turn industry’s leading dataset into AV training gold mine.

What’s New: Mobileye is sitting on a virtual treasure trove of driving data, some 200 petabytes’ worth. When queried with Mobileye’s state-of-the-art computer vision technology and extremely capable natural language understanding (NLU) models, the dataset can deliver thousands of results within seconds, even for incidents that fall into the “long tail” of rare conditions and scenarios. This helps the AV’s computer vision system handle edge cases and thereby achieve the very high mean time between failures (MTBF) targeted for self-driving vehicles.

This press release features multimedia. View the full release here: https://www.businesswire.com/news/home/20220105005968/en/

Like all drivers, autonomous vehicles will face a “long tail” of problems in which a self-driving vehicle encounters something it has not seen or experienced before. An example would be a tractor covered in snow, as shown here. Mobileye’s state-of-the-art computer vision coupled with extremely capable natural language models allows for hard mining of Mobileye’s 200 petabytes of data, delivering thousands of results within seconds, even for extremely rare conditions and scenarios. (Credit: Mobileye, an Intel Company)

“Data and the infrastructure in place to harness it is the hidden complexity of autonomous driving. Mobileye has spent 25 years collecting and analyzing what we believe to be the industry’s leading database of real-world and simulated driving experience, setting Mobileye apart by enabling highly capable AV solutions that meet the high bar for mean time between failure.” - Prof. Amnon Shashua, Mobileye president and chief executive officer

How It Works: Mobileye’s database, believed to be the world’s largest automotive dataset, comprises more than 200 petabytes of driving footage, equivalent to 16 million 1-minute driving clips from 25 years of real-world driving. Those 200 petabytes are split between Amazon Web Services (AWS) and on-premises systems. The sheer size of Mobileye’s dataset makes the company one of AWS’s largest customers globally by volume stored.

Large-scale data labeling is at the heart of building the powerful computer vision engines needed for autonomous driving. Mobileye’s rich and relevant dataset is annotated both automatically and manually by a team of more than 2,500 specialized annotators. The compute engine relies on 500,000 peak CPU cores in the AWS cloud to crunch 50 million datasets monthly, the equivalent of 100 petabytes processed every month, corresponding to 500,000 hours of driving.
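As a rough sanity check on the monthly figures above, the short Python sketch below derives the averages they imply. It assumes decimal units (1 petabyte = 10^15 bytes) and that the three quoted figures describe the same monthly workload; the derived averages are illustrative arithmetic, not numbers published by Mobileye, and the function and variable names are hypothetical.

```python
# Back-of-the-envelope averages implied by the quoted monthly figures.
# Assumption: decimal units (1 PB = 10**15 bytes, 1 GB = 10**9 bytes).
PETABYTE = 10**15
GIGABYTE = 10**9

MONTHLY_BYTES = 100 * PETABYTE      # "100 petabytes being processed every month"
MONTHLY_DATASETS = 50_000_000       # "50 million datasets monthly"
MONTHLY_DRIVING_HOURS = 500_000     # "500,000 hours of driving"


def monthly_averages(total_bytes, datasets, driving_hours):
    """Return the per-hour and per-dataset averages implied by the totals."""
    return {
        "gb_per_driving_hour": total_bytes / driving_hours / GIGABYTE,
        "gb_per_dataset": total_bytes / datasets / GIGABYTE,
        "driving_seconds_per_dataset": driving_hours * 3600 / datasets,
    }


averages = monthly_averages(MONTHLY_BYTES, MONTHLY_DATASETS, MONTHLY_DRIVING_HOURS)
for name, value in averages.items():
    print(f"{name}: {value:g}")
```

Under these assumptions, the figures work out to about 200 GB of data per hour of driving, about 2 GB per dataset, and about 36 seconds of driving per dataset on average.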

Why It Matters: Data is only valuable if you can make sense of it and put it to use. This requires deep comprehension of natural language along with state-of-the-art computer vision, Mobileye’s long-standing strength.

Every AV player faces the “long tail” problem in which a self-driving vehicle encounters something it has not seen or experienced before. This long tail contains large datasets, but many players lack the tools to make sense of them effectively. Mobileye’s state-of-the-art computer vision technology combined with extremely capable NLU models enables Mobileye to query the dataset and return thousands of long-tail results within seconds. Mobileye can then use these results to train its computer vision system and make it even more capable. Mobileye’s approach dramatically accelerates the development cycle.

What Is Included: Mobileye’s team uses an in-house search engine database with millions of images, video clips and scenarios. These include anything from “tractor covered in snow” to “traffic light in low sun,” all collected by Mobileye and feeding its algorithms. (See sample images.)

More Context: With access to the industry’s highest-quality data and the talent required to put it to use, Mobileye’s driving policy can make sound, informed decisions deterministically, an approach that removes the uncertainty of artificial intelligence-based decisions and yields a statistically high mean time between failures. At the same time, the dataset hastens the development cycle to bring the lifesaving promise of AV technology to reality more quickly.

Even More Context: Mobileye at CES 2022 | All Mobileye/Autonomous Driving News

About Intel

Intel (Nasdaq: INTC) is an industry leader, creating world-changing technology that enables global progress and enriches lives. Inspired by Moore’s Law, we continuously work to advance the design and manufacturing of semiconductors to help address our customers’ greatest challenges. By embedding intelligence in the cloud, network, edge and every kind of computing device, we unleash the potential of data to transform business and society for the better. To learn more about Intel’s innovations, go to newsroom.intel.com and intel.com.

About Mobileye

Mobileye is leading the mobility revolution with its autonomous driving and driver-assist technologies, harnessing world-renowned expertise in computer vision, machine learning, mapping and data analysis. Our technology enables self-driving vehicles and mobility solutions, powers industry-leading advanced driver-assistance systems and delivers valuable intelligence to optimize mobility infrastructure. Mobileye pioneered such groundbreaking technologies as True Redundancy™ sensing, REM™ crowdsourced mapping, and Responsibility Sensitive Safety (RSS) technologies that are driving the ADAS and AV fields toward the future of mobility. For more information: www.mobileye.com.

Forward-Looking Statements

Statements in this media release that refer to future plans and expectations are forward-looking statements that involve a number of risks and uncertainties. Words such as “anticipates,” “expects,” “intends,” “goals,” “plans,” “believes,” “seeks,” “estimates,” “continues,” “may,” “will,” “would,” “should,” “could,” and variations of such words and similar expressions are intended to identify such forward-looking statements. Statements that refer to or are based on estimates, forecasts, projections, uncertain events or assumptions, including statements relating to future products and technology and the availability and benefits of such products and technology, expectations regarding customers, market opportunity, and anticipated trends in our businesses or the markets relevant to them, also identify forward-looking statements. Such statements are based on current expectations and involve many risks and uncertainties that could cause actual results to differ materially from those expressed or implied in these forward-looking statements. Important factors that could cause actual results to differ materially are set forth in Intel’s SEC filings, including the company’s most recent reports on Forms 10-K and 10-Q, which may be obtained by visiting our Investor Relations website at www.intc.com or the SEC’s website at www.sec.gov. Intel does not undertake, and expressly disclaims any duty, to update any statement made in this news release, whether as a result of new information, new developments or otherwise, except to the extent that disclosure may be required by law.

© Intel Corporation. Intel, the Intel logo and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

Robin Holt 1-503-616-1532 robin.holt@intel.com

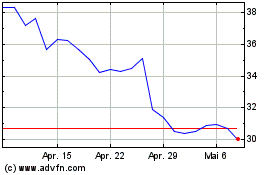

Intel (NASDAQ:INTC)

Historical Stock Chart

Von Mär 2024 bis Apr 2024

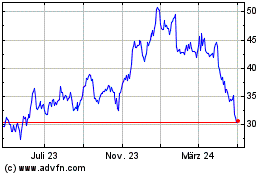

Intel (NASDAQ:INTC)

Historical Stock Chart

Von Apr 2023 bis Apr 2024